MERmaid

A multi-year, multimodal Music Emotion Recognition system built for robustness on a microservices architecture, developed across multiple MSc and BSc projects.

Overview

MERmaid is a multi-year research project developing a multimodal Music Emotion Recognition (MER) system. It focuses on robustness, modular design through microservices, and combining multiple modalities (audio, lyrics, metadata) for emotion prediction.

The project acts as an umbrella for several MSc theses and BSc projects, each contributing specific components, evaluations or architectural improvements to the overall system.

Research context

Music Emotion Recognition is an active research area pursued by the MIR group of the CISUC R&D center. Existing approaches are mostly confined to academic publications, and public prototypes often lack robustness, modularity and support for multiple data modalities. Most systems are monolithic and difficult to extend or evaluate systematically.

System design

MERmaid uses a microservices architecture in which each component (data input, feature extraction, model inference) is independently developed and deployed. Multiple MSc and BSc students contribute specific modules, so the system grows incrementally while keeping architectural coherence.
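The decoupling described above can be illustrated with a minimal sketch. It is not MERmaid's actual code: the stage functions, message fields, the energy feature and the threshold rule are all hypothetical, and in-process queues stand in for a real message broker. The point is only that each stage reads a message from one queue and writes to the next, so any stage can be replaced without touching the others.

```python
import json
import queue


def data_input_service(track_id, out_q):
    """Hypothetical data-input stage: publish a job with placeholder audio samples."""
    message = {"track_id": track_id, "samples": [0.1, -0.2, 0.4, -0.3]}
    out_q.put(json.dumps(message))


def feature_extraction_service(in_q, out_q):
    """Hypothetical feature stage: consume a job and publish one toy feature."""
    message = json.loads(in_q.get())
    samples = message["samples"]
    # Mean squared amplitude, used here only as a stand-in for real audio features.
    energy = sum(s * s for s in samples) / len(samples)
    out_q.put(json.dumps({"track_id": message["track_id"],
                          "features": {"energy": energy}}))


def model_inference_service(in_q, out_q, threshold=0.05):
    """Hypothetical inference stage: a threshold rule replaces a trained model."""
    message = json.loads(in_q.get())
    energy = message["features"]["energy"]
    label = "high_arousal" if energy > threshold else "low_arousal"
    out_q.put(json.dumps({"track_id": message["track_id"], "emotion": label}))


def run_pipeline(track_id):
    """Wire the three stages together through queues and return the final message."""
    # In-process queues stand in for broker queues in this sketch.
    raw_q, features_q, results_q = queue.Queue(), queue.Queue(), queue.Queue()
    data_input_service(track_id, raw_q)
    feature_extraction_service(raw_q, features_q)
    model_inference_service(features_q, results_q)
    return json.loads(results_q.get())


if __name__ == "__main__":
    print(run_pipeline("demo-track"))
```

Because the stages share only a message format, a student module (for example, a new feature extractor) could be swapped in by consuming and producing the same fields.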

Multi-year evolution

Each student thesis addresses a specific aspect:

- Ricardo António (2018–2019) — Proof-of-concept distributed MER system with microservices, message queues, YouTube as data source, and Essentia + SVM for classification.

- Tiago António (2020–2021) — Replaced the dummy ML models with proper MER logic for audio and lyrics, bridging software development and data science on top of Ricardo’s foundation.

- João Canoso (2020–2021) — Focused on orchestration: automated deployment, scaling, and management of the distributed system using containerisation and orchestration techniques.

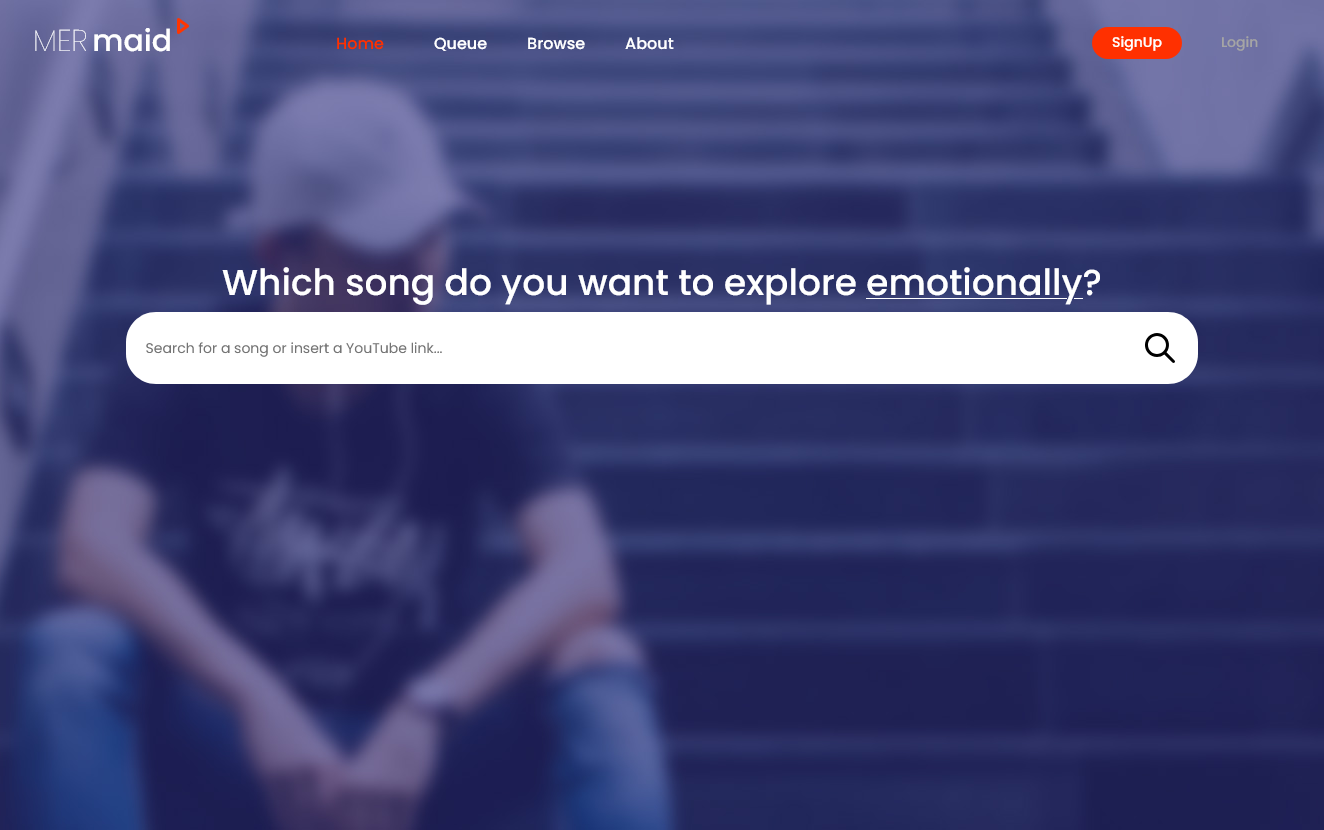

- Hélder Ribeiro (2023–2024) — Developed the MERmaid web app (v2): an Express.js API and React SPA with WebSockets, JWT authentication, rate limiting, and integration with the YouTube API and RabbitMQ broker. Currently live.

- Luís Costa (2024–2025) — MERmaid v0.3 (Deep Flow): improving system resilience and orchestration, adding source separation, voice analysis, and deep learning models, and refining private cloud deployment.

External collaboration

The project brings together CISUC researchers and students, particularly in audio analysis and machine learning. It connects applied engineering at DataLab (development of practical applications) with fundamental research at CISUC (the ML/MER logic).